AI Outperforms Doctors in Landmark Microsoft Study

A new Microsoft study reports that its Microsoft AI Diagnostic Orchestrator (MAI-DxO), a system that simulates a panel of medical experts, significantly outperformed human doctors in both accuracy and cost-effectiveness when diagnosing complex medical cases.

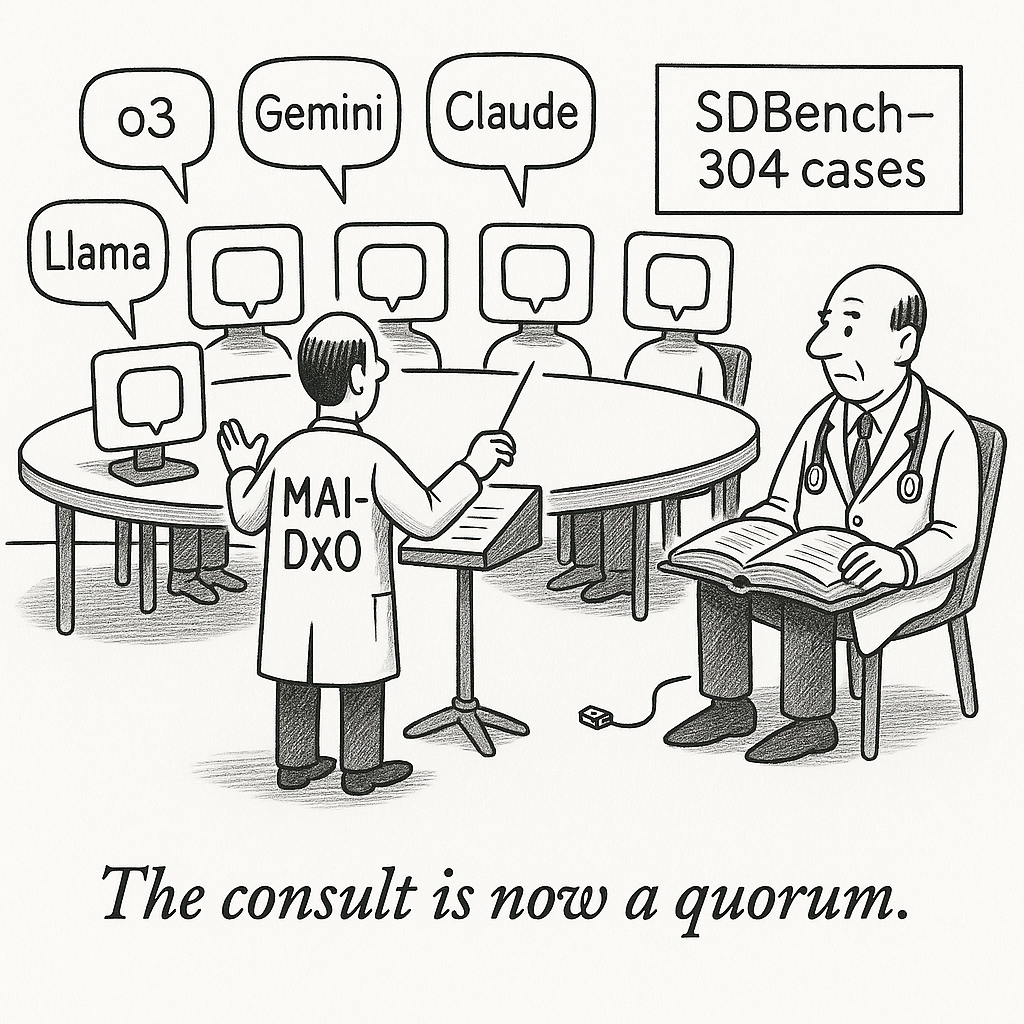

Microsoft’s artificial intelligence group has reported a research system that markedly improves diagnostic performance on difficult medical cases while also lowering estimated testing costs. The work presents the Microsoft AI Diagnostic Orchestrator (MAI-DxO), a model-agnostic controller that organizes multiple AI agents to mimic how a team of clinicians might reason together, positioning it as a potential advance in clinical decision support.

The evaluation centers on SDBench, a benchmark built from 304 diagnostically challenging case reports in the New England Journal of Medicine. These cases were transformed into interactive, stepwise scenarios to test sequential diagnosis. On a held-out test subset of 56 cases used for headline comparisons, MAI-DxO paired with OpenAI’s o3 model reached as high as 85.5% diagnostic accuracy, while 21 practicing physicians averaged about 20%. The broader benchmark was used to assess AI agents more generally.

Rather than introducing a new large language model, MAI-DxO coordinates existing models in a “virtual panel.” The approach improved accuracy, cost efficiency, or both across multiple model families, including OpenAI, Gemini, Claude, Grok, DeepSeek, and Llama. Within the benchmark’s cost framework, which relies on CPT-style price proxies, the orchestrated system produced an estimated ~20% reduction in diagnostic testing costs relative to physicians and ~70% versus an un-orchestrated o3 baseline.

Several caveats temper the results. The physicians were evaluated under atypical constraints, working alone without access to colleagues, textbooks, or online resources that are routine in real practice. Although SDBench mixes common and rare conditions, its New England Journal of Medicine source skews toward unusually complex cases, and the test setting omits real-world clinical pressures and workflows.

Microsoft frames the long-term trajectory as building increasingly capable medical AI to support, not replace, clinicians by handling exhaustive data gathering and deliberation. MAI-DxO remains a research prototype and is not cleared for clinical use. Moving toward deployment will require peer review, prospective clinical studies, and regulatory assessment. Microsoft notes collaborations with leading health organizations to pursue validation.