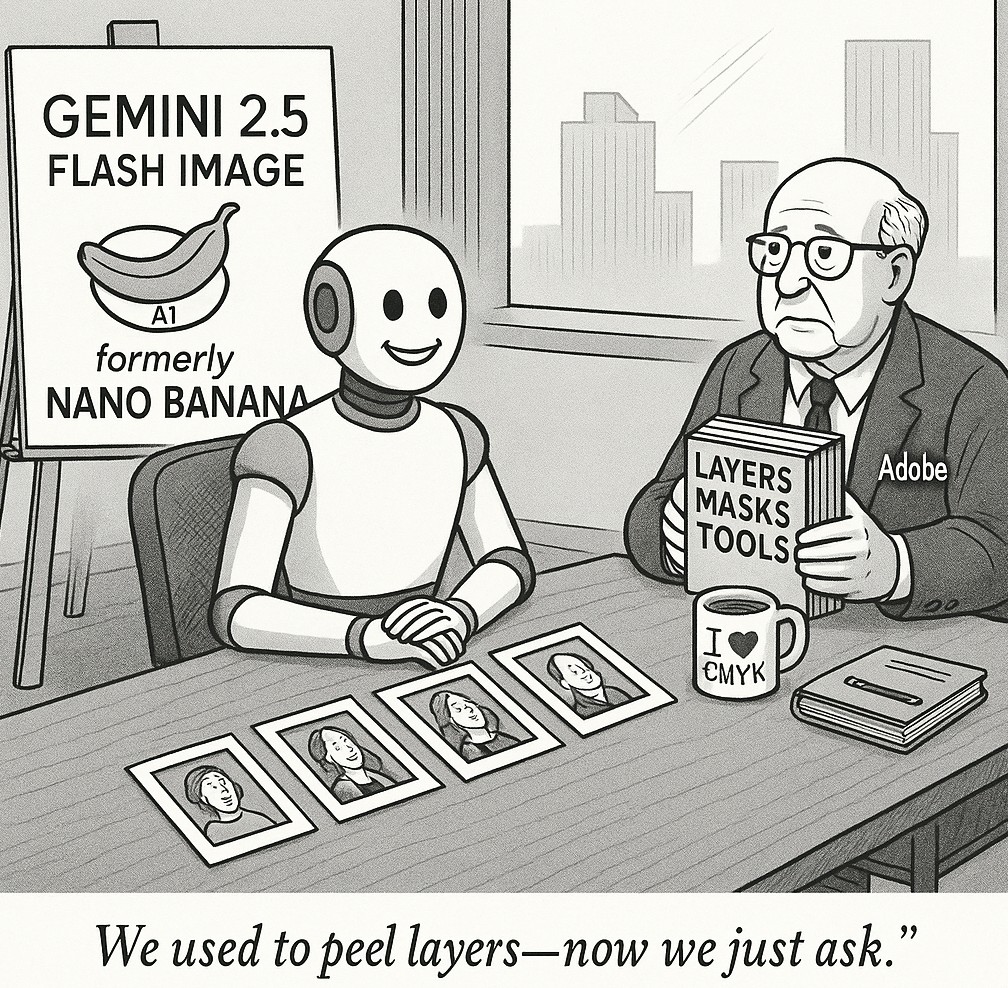

Nano Banana Is Now Available

Google’s Gemini 2.5 Flash Image, known as Nano Banana, challenges Adobe in AI image generation

A new image model, nicknamed “Nano Banana,” recently captured the attention of the AI community after rising to the top of public testing platforms with remarkable speed and consistency. It was later revealed as Google’s Gemini 2.5 Flash Image, a next-generation tool designed to reshape digital creativity. The model represents a direct challenge to long-established players like Adobe by shifting away from complex, tool-based editing and toward a simple, conversational workflow driven entirely by natural language.

One of its most important breakthroughs is advanced identity preservation, which allows users to maintain the likeness of a person, pet, or object across multiple images and edits. This addresses a long-standing problem in generative AI, making it possible to create consistent brand assets, apply new styles to a subject without altering its defining traits, or showcase a product from different perspectives.

Instead of relying on traditional editing methods like layers and masks, users can type straightforward commands to perform detailed edits such as blurring a background, removing an object, or changing a subject’s pose. The model also supports multi-turn editing, remembering the context of each change to enable an iterative, conversational process. Other advanced functions include merging multiple input images into a single seamless composition, transferring stylistic features such as textures and color schemes from one image to another, and reasoning about content using the broader knowledge base of the Gemini family, for example to solve hand-drawn equations or act as an educational assistant.

The model has limits. Early testers noted that vague prompts sometimes lead to distortions and that the system struggles to turn architectural sketches into realistic visuals, which suggests that Google may be focusing more on consumer and marketing applications than on technical design.

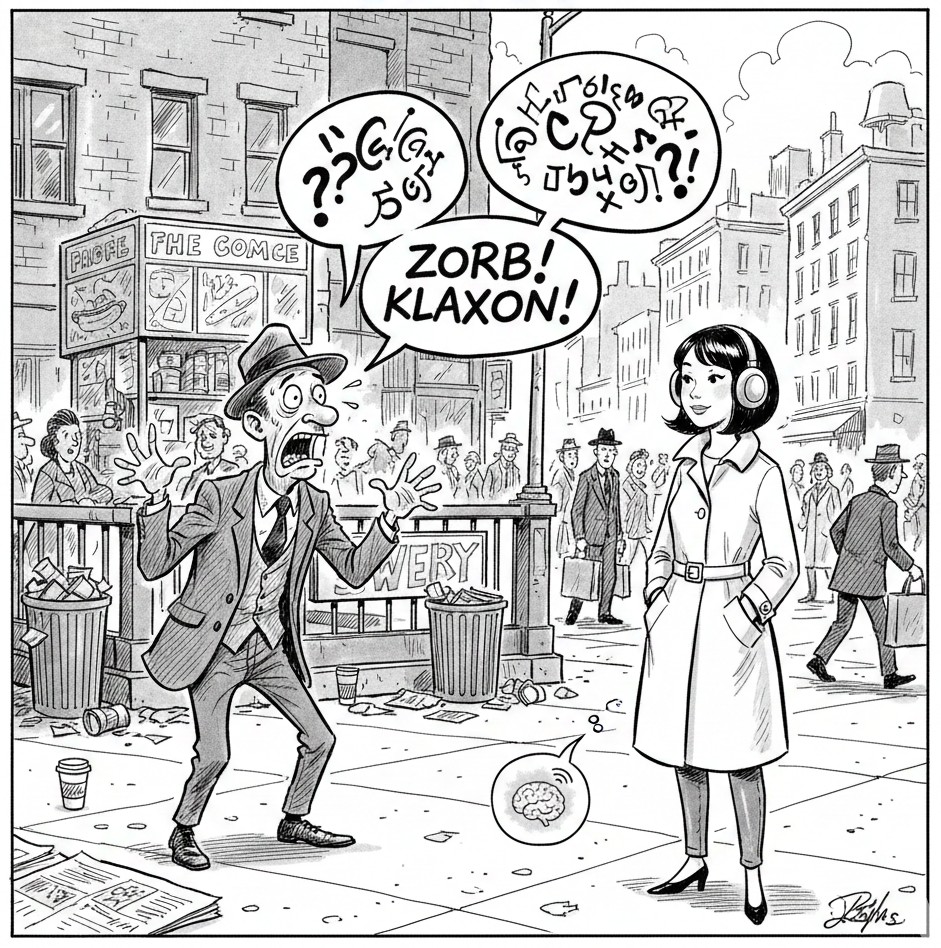

The way Google introduced Gemini 2.5 Flash Image was itself a carefully planned strategy. The model was first released anonymously on LMArena, where AI systems are judged in head-to-head comparisons. Its repeated victories built curiosity and led to the community-given nickname “Nano Banana” well before its official unveiling. The tactic echoes Google’s launch of Gmail in 2004, when the service’s offer of one gigabyte of free storage seemed so unbelievable that many assumed it was an April Fools’ Day prank. In both cases, Google used a mix of secrecy, skepticism, and surprise to generate word-of-mouth momentum and establish its product as something revolutionary.

Now positioned as a direct competitor to Adobe and Canva, Gemini 2.5 Flash Image offers professional-quality editing through plain-language commands, significantly lowering the barrier to entry for creators. Businesses are already reporting measurable results, including a 34 percent increase in conversions for an e-commerce platform and major cost reductions for a gaming studio’s character art pipeline. The tool is available to free and paid users through the Gemini app and to developers via the Gemini API at a cost of about $0.039 per image. To reduce the risk of misuse, Google has implemented a layered safety system in which every generated image carries both a visible “AI” watermark and an invisible SynthID digital watermark designed to resist tampering. This approach builds trust with consumers, pressures rivals to follow similar practices, and positions Google ahead of potential regulations governing AI-generated content.
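To put the quoted API price in perspective, here is a minimal sketch that estimates spend for a batch of generated images. It assumes the flat figure of about $0.039 per image cited above; the function name `estimate_cost` and the batch sizes are illustrative, and actual billing may differ.

```python
from decimal import Decimal

# Approximate per-image price quoted in the article (USD).
# Treated here as a flat rate, which is an assumption.
PRICE_PER_IMAGE_USD = Decimal("0.039")


def estimate_cost(num_images: int,
                  price_per_image: Decimal = PRICE_PER_IMAGE_USD) -> Decimal:
    """Return the estimated cost in USD, rounded to cents, for num_images images."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    return (price_per_image * num_images).quantize(Decimal("0.01"))


if __name__ == "__main__":
    # Hypothetical batch sizes, chosen only for illustration.
    for n in (1, 100, 10_000):
        print(f"{n:>6} images: about ${estimate_cost(n)}")
```

At that rate, cost scales linearly with volume, so even a catalog of ten thousand product images stays in the hundreds of dollars rather than the thousands.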

The emergence of Gemini 2.5 Flash Image, first known to the world as “Nano Banana,” signals Google’s broader vision for the future of creative technology. By addressing challenges like identity consistency and emphasizing a natural, conversational style of editing, the model points toward a new era of digital creation where anyone can produce professional-quality content quickly and intuitively, and where long-standing software paradigms may soon give way to more accessible, language-first workflows.